Table of Contents

- My Personal Experience

- Understanding the Modern AI Robot: From Concept to Everyday Reality

- How an AI Robot Thinks: Sensing, Perception, and Decision-Making

- Core Hardware Components: Sensors, Actuators, Power, and Compute

- Software Architecture: From Robotics Middleware to Learning Models

- Types of AI Robot Systems: Service, Industrial, Medical, Consumer, and Humanoid

- Real-World Applications: Where AI Robot Deployments Deliver Measurable Value

- Human-Robot Interaction: Speech, Gesture, Trust, and Collaboration

- Expert Insight

- Safety, Reliability, and Compliance: Making AI Robots Fit for the Physical World

- Ethics and Privacy: Data, Surveillance Concerns, and Responsible Deployment

- Business Impact and ROI: Costs, Integration, and Operational Change

- Limitations and Challenges: Why AI Robots Still Struggle with “Simple” Tasks

- The Future of AI Robot Development: Multimodal Models, Edge AI, and Smarter Autonomy

- Choosing and Using an AI Robot: Practical Considerations for Buyers and Operators

- Frequently Asked Questions

My Personal Experience

Last month at work, our team started using a small AI robot to help with inventory checks in the back room. I expected something flashy, but it was mostly practical—rolling down the aisles, scanning barcodes, and flagging gaps on a tablet. The first day it kept stopping in the same spot because a pallet was slightly over the line, and I caught myself apologizing to it like it was a coworker. After a week, I realized how much time it saved me from the most repetitive part of my shift, but it also made me pay closer attention to the tasks that actually needed judgment, like deciding what to reorder and what to mark down. It wasn’t perfect, and it definitely didn’t “feel” human, yet I still felt a weird mix of relief and unease watching it quietly do a job I used to spend hours on.

Understanding the Modern AI Robot: From Concept to Everyday Reality

An AI robot is no longer a distant idea reserved for science fiction; it is a practical machine that senses its environment, interprets data, and takes actions with a level of autonomy guided by artificial intelligence. Unlike traditional industrial machines that repeat fixed motions, an intelligent robot can adapt when conditions change. That adaptability may come from computer vision that recognizes objects, machine learning models that improve through experience, natural language processing that interprets speech, or planning algorithms that choose the best next step. The result is a system that can operate in messier, more human spaces: warehouses with variable inventory, hospitals with unpredictable workflows, farms with changing weather, and homes with cluttered rooms and pets underfoot.

It helps to separate the “robot” part from the “AI” part. The robot is the physical body: wheels, legs, arms, grippers, sensors, batteries, and motors. The AI is the brain: perception, reasoning, and decision-making that turns raw sensor readings into meaningful actions. Many systems marketed as robotic are mostly automated machines with minimal intelligence, while some AI systems are purely software with no physical form. The most capable devices blend both: robust mechanics plus a reliable intelligence stack. When people talk about an AI robot, they often mean a machine that can safely share space with humans, learn tasks faster than traditional programming allows, and handle variability without constant reconfiguration. That expectation shapes the entire field, driving progress in safety, dexterous manipulation, efficient computing at the edge, and the ethics of deploying autonomous systems in public and private settings.

How an AI Robot Thinks: Sensing, Perception, and Decision-Making

Every AI robot begins with sensing. Cameras capture color and depth, lidar measures distance, microphones pick up speech and ambient sound, force sensors detect contact, and inertial sensors estimate orientation and acceleration. These inputs are noisy, incomplete, and sometimes contradictory. The intelligence layer must fuse them into a coherent view of the world. Perception models identify people, tools, obstacles, and relevant landmarks. In a warehouse, perception might detect barcodes and pallet edges; in a hospital, it might recognize hallway boundaries and human gestures; in a home, it may distinguish a sock from a toy. Modern perception often relies on deep learning, but it still needs calibration, lighting tolerance, and strategies for handling uncertainty when the camera is blocked or the microphone captures overlapping voices.
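To make the idea of fusing noisy inputs concrete, here is a minimal sketch of one common approach, inverse-variance weighting, applied to two distance estimates of the same obstacle. The `Reading` type, the `fuse` function, and the sensor numbers are illustrative assumptions, not part of any particular robot's software; real systems typically use richer filters that also track motion over time.

```python
from dataclasses import dataclass


@dataclass
class Reading:
    """One distance estimate in metres with its noise variance (m^2)."""
    value: float
    variance: float


def fuse(readings: list[Reading]) -> Reading:
    """Combine independent estimates by inverse-variance weighting.

    Less noisy sensors get proportionally more weight, and the fused
    variance is never larger than the best single sensor's variance.
    """
    if not readings:
        raise ValueError("need at least one reading")
    if any(r.variance <= 0 for r in readings):
        raise ValueError("variances must be positive")
    weights = [1.0 / r.variance for r in readings]
    total = sum(weights)
    value = sum(w * r.value for w, r in zip(weights, readings)) / total
    return Reading(value=value, variance=1.0 / total)


if __name__ == "__main__":
    # Hypothetical numbers: a precise lidar and a noisier depth camera.
    lidar = Reading(value=2.00, variance=0.01)
    camera = Reading(value=2.30, variance=0.09)
    print(fuse([lidar, camera]))
```

The fused estimate lands much closer to the lidar reading because the lidar is assumed to be the less noisy sensor, which is the behavior one would want when two sensors disagree.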

After perception comes decision-making. The system estimates what is happening now, predicts what may happen next, and chooses an action that aligns with a goal, such as delivering medication, picking items, or navigating to a charging dock. Planning can be reactive (avoid an obstacle immediately) or deliberative (choose an efficient route through multiple rooms). Reinforcement learning can help an AI robot discover strategies through trial and error in simulation, while classical control ensures smooth motion and stability. A crucial detail is that intelligence is rarely a single model; it is a stack of modules that must work together under tight timing constraints. The robot also needs “common sense” constraints: do not collide with humans, do not exceed joint limits, and stop when contact forces exceed safe thresholds. The most successful designs treat intelligence as a system, not a magic model, balancing learning-based components with reliable engineered safeguards.
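The “common sense” constraints described above can be sketched as a small gate that sits between a planner and the motors. This is only an illustration under stated assumptions: `JointLimits`, `gate_command`, and the threshold values are hypothetical names and numbers, and a production safety function would run on dedicated, certified hardware rather than in application code.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class JointLimits:
    lower: float  # radians
    upper: float  # radians


def gate_command(target_angle: float, current_angle: float,
                 limits: JointLimits, contact_force_n: float,
                 max_force_n: float) -> tuple[float, str]:
    """Return the joint angle to command and the reason for the decision.

    The force check comes first: if contact force is above the threshold,
    the joint holds its current position regardless of what was requested.
    Otherwise the request is clamped into the joint's allowed range.
    """
    if contact_force_n > max_force_n:
        return current_angle, "stop: contact force above threshold"
    clamped = min(max(target_angle, limits.lower), limits.upper)
    if clamped != target_angle:
        return clamped, "clamped to joint limit"
    return target_angle, "ok"


if __name__ == "__main__":
    elbow = JointLimits(lower=-1.5, upper=1.5)  # hypothetical limits
    print(gate_command(2.0, 0.5, elbow, contact_force_n=5.0, max_force_n=30.0))
    print(gate_command(1.0, 0.5, elbow, contact_force_n=45.0, max_force_n=30.0))
```

Keeping this check separate from the planner means it applies no matter which component, learned or hand-written, produced the request.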

Core Hardware Components: Sensors, Actuators, Power, and Compute

The physical design of an AI robot determines what it can realistically do. Mobility might come from wheels for efficiency on flat surfaces, tracks for stability on uneven ground, or legs for stairs and rough terrain. Manipulation depends on arms with sufficient reach, torque, and precision, and grippers designed for the objects at hand. A suction cup may outperform a complex hand for boxes, while a multi-finger gripper may be needed for delicate items like fruit or medical supplies. Sensors are equally defining: depth cameras help with object geometry, tactile sensors help detect slip, and force-torque sensors enable safe interaction and compliant motion. Many real-world tasks fail not because AI is “not smart enough,” but because the robot lacks the sensing or mechanical compliance to handle variability.

Compute and power are the hidden constraints. Running neural networks for vision and language can be computationally expensive, and an AI robot often needs to do that while also controlling motors at high frequency. Edge GPUs, specialized AI accelerators, and efficient CPUs allow onboard inference without relying on constant cloud connectivity. Battery capacity influences shift length, payload, and speed; thermal design influences whether compute can run at full performance without overheating. Connectivity still matters for updates, fleet management, and optional cloud-based tasks, but robust autonomy requires that the robot remain safe and functional even when Wi-Fi drops. Engineering teams often spend as much effort on wiring, safety circuits, protective housings, and maintainability as they do on algorithms, because real deployments demand durability, easy servicing, and predictable performance over thousands of operating hours.

Software Architecture: From Robotics Middleware to Learning Models

Under the hood, an AI robot typically runs a layered software architecture. At the bottom are real-time controllers that handle motor commands, sensor sampling, and safety interlocks. Above that are localization and mapping modules that estimate the robot’s position, build a map, and keep navigation stable as environments change. Middleware such as ROS and ROS 2 (and proprietary equivalents) coordinates messaging between components, enabling modular development and easier integration of drivers, planners, and perception nodes. This structure matters because robotics is inherently multidisciplinary: mechanical, electrical, and software teams must align, and the system must be testable. Well-structured logs, replay tools, and simulation environments allow teams to reproduce issues and validate fixes before rolling them out to a fleet.
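The messaging pattern that middleware provides can be illustrated with a toy in-process publish/subscribe bus. This is deliberately not the ROS or ROS 2 API; `MessageBus`, the topic names, and the 0.5 m threshold are assumptions made up for this sketch, which only shows why decoupling components through topics makes them easier to swap and test.

```python
from typing import Any, Callable


class MessageBus:
    """Toy publish/subscribe bus: nodes share topics, not references."""

    def __init__(self) -> None:
        self._subscribers: dict[str, list[Callable[[Any], None]]] = {}

    def subscribe(self, topic: str, callback: Callable[[Any], None]) -> None:
        self._subscribers.setdefault(topic, []).append(callback)

    def publish(self, topic: str, message: Any) -> None:
        # Deliver synchronously to every subscriber of this topic.
        for callback in list(self._subscribers.get(topic, [])):
            callback(message)


def run_demo() -> list[float]:
    """Wire a perception topic to a planner and record its speed commands."""
    bus = MessageBus()
    commands: list[float] = []

    def planner(obstacle_distance_m: float) -> None:
        # Hypothetical rule: stop if anything is closer than 0.5 m.
        speed = 0.0 if obstacle_distance_m < 0.5 else 1.0
        bus.publish("cmd_speed", speed)

    bus.subscribe("obstacle_distance", planner)
    bus.subscribe("cmd_speed", commands.append)

    for distance in (3.0, 0.3):
        bus.publish("obstacle_distance", distance)
    return commands


if __name__ == "__main__":
    print(run_demo())
```

Because the planner only knows topic names, a recorded log of `obstacle_distance` messages could be replayed into it without any sensor attached, which is the property that makes replay tools and simulation practical.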

Learning models add new capabilities but also new responsibilities. When a perception model is updated, it can improve detection in one lighting condition and regress in another. When a policy learned in simulation is transferred to reality, small differences in friction and sensor noise can cause unexpected behavior. A reliable AI robot therefore uses rigorous evaluation: dataset curation, scenario-based testing, and monitoring in production. Many deployments include “shadow mode” evaluation where new models run in parallel without controlling the robot, allowing comparison against the current model. Safety layers also remain independent from learned components, ensuring that even if a neural network misclassifies an object, collision avoidance and emergency stop logic still prevent dangerous motion. The best software stacks treat AI as a powerful tool inside a disciplined engineering process, not as a replacement for it.
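A shadow-mode comparison can be sketched in a few lines. In this illustration, both models see every frame, only the active model's output is acted on, and disagreements are logged for later review. The function name `shadow_compare`, the frame format, and the two threshold-based stand-in models are hypothetical; real models and logging pipelines would be far more involved.

```python
from typing import Callable, Iterable

# A "model" here is any function from a sensor frame to a label.
Model = Callable[[dict], str]


def shadow_compare(frames: Iterable[dict], active: Model,
                   candidate: Model) -> tuple[list[str], list[tuple[int, str, str]]]:
    """Run the candidate alongside the active model without giving it control.

    Returns the labels the robot acted on (always the active model's) and a
    list of (frame index, active label, candidate label) disagreements.
    """
    acted_on: list[str] = []
    disagreements: list[tuple[int, str, str]] = []
    for index, frame in enumerate(frames):
        active_label = active(frame)
        candidate_label = candidate(frame)  # logged only, never executed
        acted_on.append(active_label)
        if candidate_label != active_label:
            disagreements.append((index, active_label, candidate_label))
    return acted_on, disagreements


def current_model(frame: dict) -> str:
    # Stand-in for the deployed perception model.
    return "obstacle" if frame["score"] > 0.5 else "clear"


def new_model(frame: dict) -> str:
    # Stand-in for a more sensitive candidate model.
    return "obstacle" if frame["score"] > 0.3 else "clear"


if __name__ == "__main__":
    frames = [{"score": 0.8}, {"score": 0.4}, {"score": 0.1}]
    print(shadow_compare(frames, current_model, new_model))
```

Reviewing the logged disagreements, rather than aggregate accuracy alone, is what surfaces cases where an update improves one condition and regresses another.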

Types of AI Robot Systems: Service, Industrial, Medical, Consumer, and Humanoid

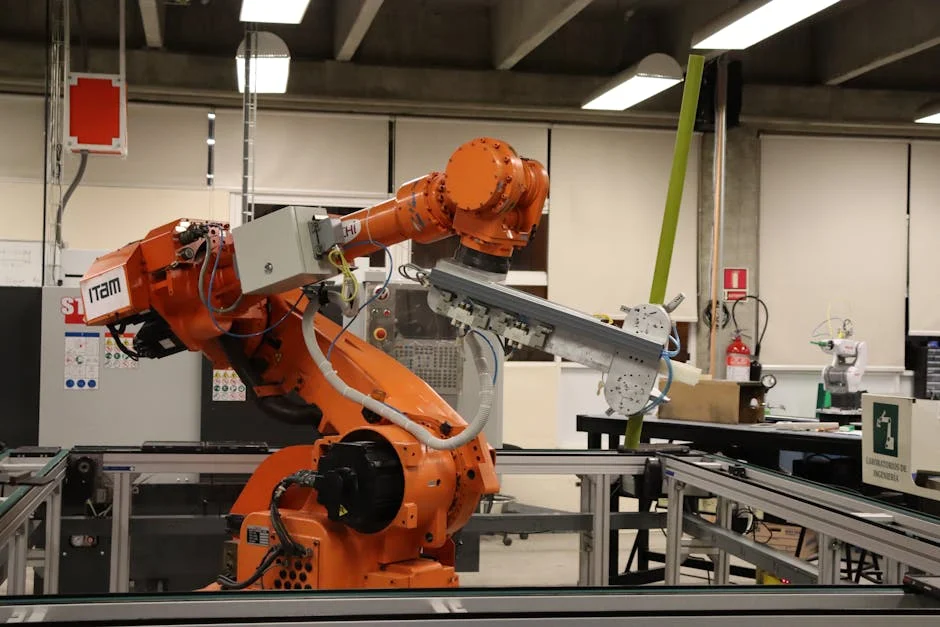

The term AI robot covers a wide range of machines. Industrial robots have long existed, but the modern shift is toward flexibility: collaborative robots that work near people, vision-guided picking systems, and mobile manipulators that move between workstations. Service robots include autonomous floor cleaners, delivery robots in hospitals and hotels, and inspection robots for infrastructure. Consumer robotics spans vacuuming, lawn mowing, window cleaning, and companion devices designed to interact socially. Each category has different constraints: factories prioritize throughput and uptime, hospitals prioritize safety and hygiene, and consumer devices prioritize affordability and ease of use.

Humanoid and legged robots receive significant attention because they promise to operate in human-built environments without special infrastructure. However, making a humanoid AI robot useful is difficult: balancing is complex, batteries drain quickly, and dexterous hands are challenging to build and control. Even so, progress in actuators, lightweight materials, and whole-body control is making these platforms more practical. Meanwhile, specialized non-humanoid designs often deliver immediate value: a compact robot with a lift mechanism may outperform a humanoid at moving bins in a warehouse. The most sensible approach is to choose a form factor that matches the job, then add intelligence that improves robustness, learning speed, and safe collaboration. The market is increasingly segmented, with success coming to systems that solve specific problems reliably rather than attempting to be a general-purpose machine too early.

Real-World Applications: Where AI Robot Deployments Deliver Measurable Value

In logistics and fulfillment, an AI robot can reduce walking time, increase pick rates, and improve inventory accuracy. Autonomous mobile robots move racks or totes to human pickers, while vision-guided arms pick items directly. The variability of SKUs, packaging, and lighting makes AI-driven perception essential. In manufacturing, flexible automation helps small and medium businesses handle short production runs without expensive retooling. Robots equipped with force sensing and adaptive control can insert parts, perform sanding, or handle assembly tasks with less rigid fixturing. The economic value comes from consistent output, reduced injuries, and the ability to scale operations without proportional labor increases.

In healthcare, the promise of an AI robot is not to replace clinicians but to remove friction from workflows. Delivery robots move linens, lab samples, and meals; disinfection robots support infection control; telepresence robots allow remote specialists to check in with patients. Surgical robotics, while not always “AI” in the popular sense, increasingly incorporates intelligent assistance such as image guidance and motion stabilization. On farms, autonomous machines can monitor crops, target weeds with precision spraying, and harvest produce with less waste. In energy and infrastructure, robots inspect pipelines, wind turbines, and power lines, reducing risk to human workers. Across these sectors, the strongest use cases share a pattern: the robot handles repetitive or hazardous tasks, while humans focus on judgment, empathy, and complex exception handling.

Human-Robot Interaction: Speech, Gesture, Trust, and Collaboration

For an AI robot to be accepted in shared spaces, it must communicate intent clearly. People need to know whether the machine has seen them, what it plans to do next, and how to intervene if something looks wrong. Interaction design includes lights, sounds, screen prompts, and motion cues like slowing down near pedestrians. Natural language interfaces can help, but they must be reliable and context-aware; a robot that misunderstands instructions creates frustration and safety risk. Gesture recognition and simple button-based controls often remain important because they are predictable. In workplaces, training materials and clear escalation paths help employees feel comfortable, especially when robots operate nearby.

| Type | Best for | Key strengths |
|---|---|---|
| Industrial AI Robot | High-volume manufacturing and precision automation | Speed, repeatability, quality control, predictive maintenance |
| Service AI Robot | Customer-facing tasks in retail, hospitality, healthcare support | Natural interaction, navigation in human spaces, task scheduling |
| Home/Personal AI Robot | Everyday assistance and smart-home routines | Voice control, personalization, monitoring, simple household tasks |

Expert Insight

Define a single, measurable job for the robot (e.g., “sort 200 items/hour with <1% errors”) and map the workflow end-to-end before deployment; then run a short pilot in a controlled area to confirm speed, accuracy, and safety assumptions.

Build reliability into daily operations by setting clear handoff rules for edge cases, scheduling routine calibration and cleaning, and tracking a simple checklist of uptime, error types, and near-misses to guide quick process improvements.

Trust is earned through consistent behavior. An AI robot that occasionally makes surprising moves will quickly lose acceptance, even if it is statistically “safe.” That is why many systems prioritize smooth trajectories, conservative speed limits near humans, and explicit yielding behavior. Collaboration also requires understanding social norms: do not block doorways, do not approach too closely from behind, and do not interrupt conversations by rolling between people. In some settings, the robot must handle privacy expectations, such as avoiding unnecessary video recording in patient areas. The best interaction design treats the robot as a coworker that follows rules, signals intentions, and respects boundaries. This is as much a product design challenge as it is an AI challenge, and it often determines whether a deployment succeeds beyond the pilot phase.

Safety, Reliability, and Compliance: Making AI Robots Fit for the Physical World

Because an AI robot moves in the real world, safety is not optional. Safety engineering includes emergency stop circuits, speed and separation monitoring, collision detection, and redundant sensors where needed. Collaborative robots often have force limits to reduce injury risk, and mobile robots use lidar and vision to maintain safe distances. Beyond physical safety, reliability matters: a robot that frequently needs human rescue or remote intervention can erase productivity gains. Reliability is built through robust mechanical design, fault detection, graceful degradation, and clear maintenance procedures. Seemingly small details, like protecting sensors from dust, choosing connectors that do not loosen under vibration, and designing wheels that handle floor transitions, can make or break uptime.
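The basic idea behind speed and separation monitoring can be sketched as a speed cap that depends on the distance to the nearest person. The function name `allowed_speed` and all default distances and speeds below are illustrative assumptions, not values from any standard; a real implementation would also account for braking distance, sensor latency, and the person's own motion.

```python
def allowed_speed(distance_m: float, stop_distance_m: float = 0.5,
                  slow_distance_m: float = 2.0,
                  max_speed_mps: float = 1.5) -> float:
    """Return the maximum permitted speed given distance to the nearest person.

    Inside the stop distance the robot must halt. Beyond the slow distance
    it may run at full speed. In between, the cap rises linearly.
    """
    if not 0 <= stop_distance_m < slow_distance_m:
        raise ValueError("need 0 <= stop_distance_m < slow_distance_m")
    if distance_m <= stop_distance_m:
        return 0.0
    if distance_m >= slow_distance_m:
        return max_speed_mps
    fraction = (distance_m - stop_distance_m) / (slow_distance_m - stop_distance_m)
    return max_speed_mps * fraction


if __name__ == "__main__":
    for distance in (0.3, 1.25, 3.0):
        print(distance, allowed_speed(distance))
```

A gradual ramp like this also supports the interaction goals discussed earlier: the robot visibly slows as it approaches people instead of stopping abruptly.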

Compliance and standards vary by region and application, but most serious deployments align with established frameworks for machinery safety, functional safety, and workplace requirements. An AI robot used in public spaces may need additional certifications related to electrical safety, radio emissions, and accessibility. In regulated industries like healthcare, data handling and sanitation protocols add more constraints. AI introduces additional risk: models can behave unpredictably outside their training distribution. To manage that, teams implement conservative constraints, extensive testing, and monitoring to detect drift. Many organizations also create operational policies: where the robot can drive, when it must be escorted, and how incidents are reported. The practical goal is not theoretical perfection; it is a measurable reduction in risk and a clear accountability chain so that safety is continuously improved rather than assumed.

Ethics and Privacy: Data, Surveillance Concerns, and Responsible Deployment

An AI robot often collects data by necessity: video for navigation, depth maps for obstacle avoidance, logs for debugging, and sometimes audio for voice commands. That creates understandable concern about surveillance, especially in homes, hospitals, and workplaces. Responsible deployment starts with data minimization (collect only what is needed) and transparency about what is recorded, stored, and shared. On-device processing can reduce exposure by avoiding cloud uploads for routine perception tasks. When cloud services are used, encryption, access controls, and retention limits are essential. Some designs include physical shutters for cameras or clear indicators when recording is active, giving people a visible signal that supports informed consent.

Ethical questions extend beyond privacy. If an AI robot influences work allocation, it can affect job quality and worker autonomy. If it operates in public, it must avoid biased behaviors, such as failing to detect certain body types or mobility aids due to unrepresentative training data. There is also the issue of accountability when something goes wrong: the manufacturer, the integrator, and the operator each have responsibilities. Responsible robotics programs typically include bias testing for perception, clear incident reporting, and user-centered design that accounts for vulnerable populations. The most sustainable path is to treat ethics as a design constraint from the start rather than a marketing add-on. That approach builds trust, reduces legal risk, and creates systems that fit into real human environments without eroding the sense of safety people expect in their daily lives.

Business Impact and ROI: Costs, Integration, and Operational Change

Buying an AI robot is rarely just a hardware purchase; it is an operational change. The total cost includes deployment planning, site mapping, network upgrades, safety reviews, staff training, and ongoing maintenance. Integration can involve warehouse management systems, elevator controls, door access, or manufacturing execution systems. The strongest return on investment appears when a process is stable enough to be automated but still costly in labor or risk. For example, moving materials across a large facility is simple but time-consuming; autonomous transport can reclaim hours per shift. In other cases, the value is in quality: inspection robots can detect defects consistently, reducing rework and warranty claims.

Operationally, organizations must decide how humans and an AI robot share responsibility. Who responds when the robot gets stuck? How are exceptions handled? What metrics define success: throughput, safety incidents, uptime, or customer satisfaction? Many deployments succeed by starting with a narrow scope and expanding once reliability is proven. Fleet management tools help track battery health, mission completion rates, and hotspot locations where robots frequently encounter issues. Some vendors offer robots-as-a-service pricing, converting capital expense into operating expense and bundling support. Regardless of the contract model, sustainable ROI comes from aligning the robot’s capabilities with a process redesign, not from expecting the machine to fit perfectly into an unchanged workflow. When people, processes, and technology are aligned, intelligent robotics can deliver compounding gains over time.
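Two of the fleet metrics mentioned above, mission completion rate and hotspot locations, are simple to compute from a mission log. The sketch below assumes a hypothetical `Mission` record with a `stuck_at` zone name; actual fleet management tools define their own schemas, and the zone names and data here are invented for illustration.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Optional


@dataclass
class Mission:
    robot_id: str
    completed: bool
    stuck_at: Optional[str] = None  # zone where the robot needed help, if any


def completion_rate(missions: list[Mission]) -> float:
    """Fraction of missions that finished without being abandoned."""
    if not missions:
        return 0.0
    return sum(1 for m in missions if m.completed) / len(missions)


def hotspots(missions: list[Mission], top_n: int = 3) -> list[tuple[str, int]]:
    """Zones where robots most often got stuck, most frequent first."""
    counts = Counter(m.stuck_at for m in missions if m.stuck_at is not None)
    return counts.most_common(top_n)


if __name__ == "__main__":
    log = [  # hypothetical mission log
        Mission("r1", True),
        Mission("r1", False, "dock-door"),
        Mission("r2", False, "dock-door"),
        Mission("r2", True),
        Mission("r2", False, "aisle-7"),
    ]
    print(completion_rate(log))
    print(hotspots(log))
```

A recurring hotspot usually points to a fix in the environment or the process, such as a door that needs automating or a lane that keeps getting blocked, rather than a fault in any single robot.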

Limitations and Challenges: Why AI Robots Still Struggle with “Simple” Tasks

Even a sophisticated AI robot can struggle with tasks humans consider trivial, like picking up a transparent object, opening a tight drawer, or navigating a crowded hallway where people behave unpredictably. The physical world is full of edge cases: reflective surfaces confuse depth sensors, clutter hides important features, and objects vary in texture and weight. Dexterous manipulation remains especially hard because it requires precise perception, fine motor control, and tactile feedback. Many robots can grasp known objects in controlled settings, yet fail when items are slightly deformed, slippery, or partially occluded. This gap between lab demos and real operations explains why successful deployments often focus on constrained tasks with clear boundaries.

Generalization is another hurdle. A model trained for one site may not transfer perfectly to another due to different lighting, floor patterns, or signage. A mobile AI robot that navigates well in a warehouse might struggle in a hospital with reflective floors and moving beds. Language interfaces can also be brittle: accents, background noise, and ambiguous phrasing cause misinterpretation. Additionally, long-term autonomy is difficult because environments change: furniture moves, inventory shifts, and construction alters pathways. The most robust systems incorporate continuous mapping updates, anomaly detection, and human-in-the-loop tools for quick corrections. These limitations do not mean robotics is failing; they show that building embodied intelligence is fundamentally harder than building software-only AI. Progress is steady, but practical success depends on choosing use cases with tolerable variability and designing for graceful handling of the unexpected.

The Future of AI Robot Development: Multimodal Models, Edge AI, and Smarter Autonomy

The next generation of AI robot systems is being shaped by multimodal AI: models that combine vision, language, and action. Instead of programming every behavior, developers can use demonstration data, natural language instructions, and simulation to teach broader skills. This can reduce integration time and allow robots to adapt faster to new tasks. At the same time, there is a strong push toward edge AI, where more inference happens on the robot for lower latency, higher reliability, and better privacy. As chips become more efficient, robots can run larger models without sacrificing battery life. Better toolchains for deploying and updating models will also matter, enabling safer rollouts and faster iteration across fleets.

Hardware advances will amplify software gains. Improved actuators with better torque control, more sensitive tactile skins, and lighter materials will make an AI robot safer and more capable around people. Standardized interfaces for grippers, sensors, and charging can lower costs and speed up adoption. On the autonomy side, expect more robust navigation in dynamic environments, better understanding of human intent, and richer interaction through speech and gesture, paired with stricter safety constraints and clearer transparency. The most impactful future systems may not look like a single do-everything humanoid; they may be coordinated teams of specialized robots managed through unified software, each optimized for a subset of tasks. As these systems mature, the focus will increasingly shift from novelty to reliability, compliance, and measurable benefits in everyday operations.

Choosing and Using an AI Robot: Practical Considerations for Buyers and Operators

Selecting an AI robot starts with the job, not the gadget. A clear task definition should include environment details, payload, duty cycle, safety requirements, and the acceptable rate of human intervention. For navigation robots, evaluate floor conditions, ramps, door thresholds, and elevator needs. For manipulation, evaluate object diversity, lighting, and required precision. Ask for evidence of performance in similar conditions, not just controlled demos. Pilot programs should include success metrics like uptime, mission completion, and time saved, along with a plan for handling exceptions. It is also wise to evaluate vendor support, spare parts availability, and the maturity of monitoring tools, because long-term operations depend on fast troubleshooting and predictable maintenance.

Once deployed, an AI robot benefits from operational discipline. Keep maps updated, maintain clear pathways, and establish simple rules for human coworkers, such as not parking carts in designated robot lanes. Train staff on safe interaction, emergency stops, and reporting issues with enough detail to reproduce them. Monitor performance trends to catch early signs of degradation, such as increased localization failures or shorter battery runtime. Update models and software cautiously, with staged rollouts and rollback options. Over time, organizations that treat robotics as a living system, one that needs continuous improvement, tend to see the best results. With realistic expectations, careful matching of capability to use case, and strong safety and privacy practices, an AI robot can become a dependable part of daily work rather than an experiment that never leaves the pilot stage.

Frequently Asked Questions

What is an AI robot?

An AI robot is a physical machine that uses artificial intelligence to perceive its environment, make decisions, and act autonomously or semi-autonomously.

How is an AI robot different from a regular robot?

Regular robots often follow fixed rules or scripts, while AI robots can adapt using techniques like machine learning, computer vision, and natural-language processing.

What are common uses of AI robots today?

They’re used in manufacturing, warehouses, healthcare assistance, home cleaning, agriculture, security, and research.

What technologies do AI robots rely on?

Typical components include sensors (cameras, lidar), perception models, planning/control algorithms, onboard computing, and sometimes cloud connectivity.

Are AI robots safe to use around people?

They can be safe when built with strong safeguards, such as collision detection, strict speed and force limits, and thorough real-world testing, but the level of risk still depends on the specific task and the environment the robot operates in.

Will AI robots replace human jobs?

An AI robot can automate certain tasks, but it also creates new jobs in areas like design, maintenance, supervision, and system integration. The overall impact depends on the industry.
