Table of Contents

- My Personal Experience

- Understanding the Modern AI Robot: More Than a Machine

- Core Components: Sensors, Actuators, and the Intelligence Layer

- How Learning and Decision-Making Work in an AI Robot

- Types of AI Robot Systems You’ll Encounter Today

- Real-World Applications: From Factories to Homes

- Human-Robot Interaction: Safety, Trust, and Usability

- Ethics and Governance: Privacy, Bias, and Accountability

- Expert Insight

- Business Value: ROI, Productivity, and Operational Resilience

- Technical Challenges: Reliability, Data, and the Reality Gap

- Connectivity and Edge Computing: Where the Intelligence Lives

- Choosing and Deploying an AI Robot: Practical Considerations

- The Future of the AI Robot: What to Expect Next

- Frequently Asked Questions

My Personal Experience

Last month at work we started using a small AI robot to help with inventory in our storage room, and I was skeptical at first because I pictured something clunky that would just get in the way. On the first day I walked alongside it with a tablet while it rolled down the aisles, scanning barcodes and calling out mismatches in a calm, slightly monotone voice. It actually caught a box we’d mislabeled weeks ago, which saved me from a frustrating end-of-day recount. The weirdest part was how quickly I began treating it like a coworker—I found myself saying “thanks” when it paused to let me pass. It’s not magic and it still gets confused when the floor is crowded, but it’s made the routine parts of my job quieter and a little less stressful.

Understanding the Modern AI Robot: More Than a Machine

An AI robot is often imagined as a humanoid helper that walks, talks, and performs chores, yet the real meaning is wider and more practical. In modern engineering, an AI robot is a physical system that senses the world, processes information, and acts with some degree of autonomy. The “robot” part provides the body: motors, wheels, arms, grippers, cameras, microphones, depth sensors, force sensors, and sometimes specialized tools. The “AI” part provides the brain: perception, planning, learning, and decision-making. Together they create a class of machines that can do more than repeat a fixed script. They can adjust to changing conditions, recognize objects, detect anomalies, and choose among multiple actions based on goals and constraints. This combination is why the term has become central across factories, hospitals, warehouses, farms, and homes. It also explains why the best results come from balancing intelligence with reliable mechanics, safety systems, and careful design rather than chasing a single “general intelligence” breakthrough.

What differentiates an AI robot from traditional automation is not merely speed or strength; it is adaptability. Classic industrial robots excel in structured environments where every motion is known in advance. An AI-driven robot can cope when the environment becomes less predictable: a box is placed slightly off-center, lighting changes, a human walks into the workspace, a shelf is partially empty, or the robot must identify a new product variant. That doesn’t mean every AI-enabled machine is fully autonomous or human-like. Many are collaborative systems that rely on human supervision, constrained operating zones, and fallback behaviors. The intelligence can be narrow but valuable, such as recognizing defects on a production line or navigating a warehouse without markers. Understanding these distinctions helps set realistic expectations and reveals why adoption is accelerating: the practical gains come from reducing brittleness, improving safety, and enabling robots to function in real-world conditions where perfect repetition is impossible.

Core Components: Sensors, Actuators, and the Intelligence Layer

Every AI robot rests on three foundational layers: sensing, actuation, and computation. Sensing translates physical reality into data. Cameras provide color imagery; stereo cameras and depth sensors infer distance; LiDAR measures ranges; IMUs detect motion; microphones capture audio cues; tactile sensors measure grip and contact; and force-torque sensors help a robot “feel” resistance when inserting parts or handling fragile items. The choice of sensors determines how well a robot can perceive its surroundings and how robustly it can operate when conditions change. For example, a warehouse robot may rely on LiDAR and odometry for navigation, while a surgical robot uses high-resolution imaging, precise encoders, and force feedback for fine control. Sensor fusion, the process of combining multiple sources, is a key technique that enables reliable perception even when one sensor is noisy or partially blocked.
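To make sensor fusion concrete, here is a minimal sketch of one common textbook approach: inverse-variance weighting of independent estimates of the same quantity. The function name, the two sensors, and the noise figures are illustrative assumptions, not values from any particular robot; real systems typically use richer filters.

```python
def fuse_estimates(readings):
    """Fuse independent estimates of one quantity by inverse-variance weighting.

    readings: list of (value, variance) tuples, each variance > 0.
    Returns (fused_value, fused_variance).
    """
    weights = [1.0 / variance for _, variance in readings]
    total_weight = sum(weights)
    fused_value = sum(w * value for w, (value, _) in zip(weights, readings)) / total_weight
    fused_variance = 1.0 / total_weight
    return fused_value, fused_variance


# Hypothetical distance-to-shelf readings in meters: (value, variance).
lidar = (2.00, 0.01)         # assumed low-noise sensor
depth_camera = (2.20, 0.09)  # assumed noisier sensor
fused_value, fused_variance = fuse_estimates([lidar, depth_camera])
```

The fused value lands closer to the less noisy reading, and the fused variance is smaller than either input, which is the sense in which combining sources makes perception more reliable.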

Actuation is the capability to move and interact: electric motors, servo drives, pneumatic systems, hydraulic actuators, and specialized mechanisms like parallel grippers, suction cups, and compliant joints. The “body” is not just hardware; it includes safety-rated brakes, torque limits, collision detection, and mechanical compliance that reduces injury risk. The intelligence layer sits on top, running algorithms for perception, localization, mapping, planning, and control. Machine learning models might classify objects or estimate grasp points, while classical control loops ensure smooth motion. Many systems blend deterministic methods with learned components to achieve both predictability and flexibility. Importantly, an AI robot is only as good as the integration of these layers. A brilliant model is useless without accurate calibration, stable power, and a mechanical design that can repeat movements precisely. Conversely, world-class mechanics can underperform if the perception stack cannot handle real-world variability such as glare, dust, clutter, or changing inventory layouts.

How Learning and Decision-Making Work in an AI Robot

The intelligence in an AI robot is often built from a pipeline of tasks: perceive, interpret, decide, and act. Perception might include object detection, segmentation, pose estimation, and tracking, processes that turn pixels and sensor readings into meaningful entities like “a bottle on the table” or “a human approaching from the left.” Interpretation adds context: is the bottle full, is it the correct SKU, is the person an authorized worker, is the path blocked? Decision-making then selects an action based on goals and constraints. In a warehouse, the goal might be to pick items quickly while avoiding collisions and respecting no-go zones. In a hospital, it might be to deliver medications on schedule while yielding to staff and patients. Planning algorithms compute paths and motions, while control algorithms execute them smoothly. The “AI” portion can appear in any of these stages, but it is most visible in perception and in policies that choose actions under uncertainty.
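The perceive, interpret, decide, act pipeline can be sketched in a few lines. This is a toy illustration under stated assumptions: the `Detection` type, the distance thresholds, and the action names are all hypothetical, and perception is stubbed out as a ready-made list of detections.

```python
from dataclasses import dataclass


@dataclass
class Detection:
    label: str         # e.g. "person" or "box" (output of an assumed perception stage)
    distance_m: float  # distance from the robot along its planned path


def interpret(detections, stop_distance_m=1.0):
    """Add context to raw detections: is the path blocked, is a person nearby?"""
    path_blocked = any(d.distance_m < stop_distance_m for d in detections)
    person_near = any(
        d.label == "person" and d.distance_m < 2 * stop_distance_m
        for d in detections
    )
    return {"path_blocked": path_blocked, "person_near": person_near}


def decide(context):
    """Select an action; the most conservative constraint is checked first."""
    if context["path_blocked"]:
        return "stop"
    if context["person_near"]:
        return "yield"
    return "proceed"


def step(detections):
    """One pass through interpret and decide; acting is left to the controller."""
    return decide(interpret(detections))


action = step([Detection("person", 1.5)])  # a person 1.5 m ahead
```

Ordering the checks from most to least conservative is a design choice that keeps the decision stage easy to audit.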

Learning can happen in several ways. Supervised learning trains models on labeled examples, such as images of objects with bounding boxes. Self-supervised learning can leverage large amounts of unlabeled data to learn general visual or audio representations that later adapt to specific tasks. Reinforcement learning can teach behaviors through trial and error, often in simulation to reduce risk and cost, before transfer to the physical robot. Another approach is imitation learning, where a robot learns from demonstrations by humans. In practice, many deployments use a hybrid: a learned perception system, a rule-based safety layer, and a planner that is partly learned and partly hand-engineered for reliability. This layered approach is common because real-world robotics must handle edge cases safely. When a model is uncertain, an AI robot may slow down, request help, or switch to a conservative fallback behavior. Robust decision-making is not about always being bold; it is about managing uncertainty and keeping operations safe, predictable, and auditable.
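The idea of falling back when a model is uncertain can be expressed as a small rule-based layer on top of a learned model's confidence score. The sketch below assumes the model exposes a confidence in the range 0 to 1; the thresholds and behavior names are hypothetical and would need tuning and validation on a real system.

```python
def choose_behavior(confidence, proceed_above=0.9, slow_above=0.6):
    """Map a model confidence (0.0 to 1.0) to a conservative behavior tier."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0.0 and 1.0")
    if confidence >= proceed_above:
        return "proceed"
    if confidence >= slow_above:
        return "slow_down"
    return "request_help"


# Assumed confidences from a grasp-point estimator on three different items.
behaviors = [choose_behavior(c) for c in (0.97, 0.72, 0.31)]
```

Keeping this layer as plain rules, separate from the learned model, makes the fallback logic easy to review and log.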

Types of AI Robot Systems You’ll Encounter Today

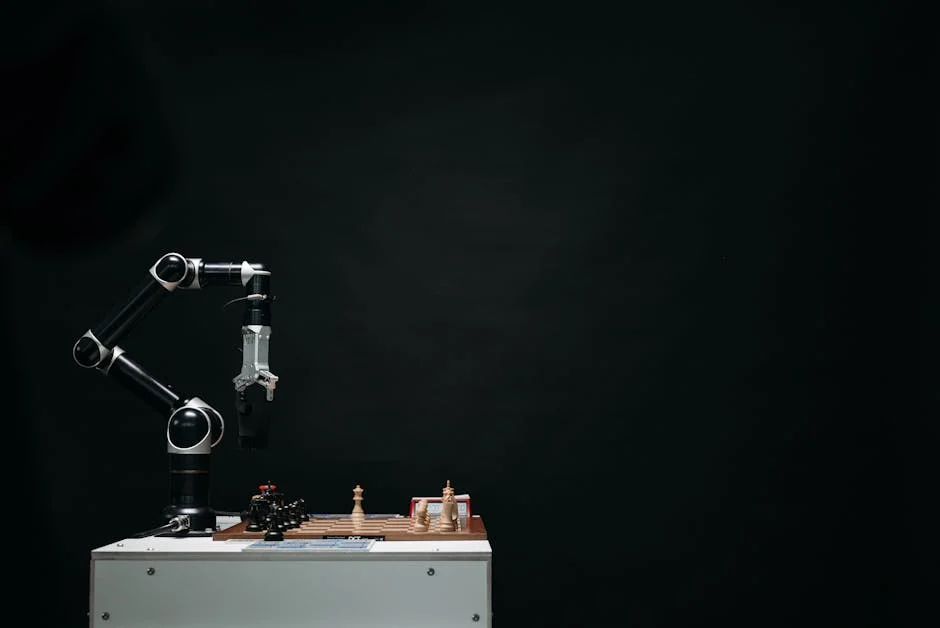

There is no single “standard” AI robot. Instead, there are families of robots designed for specific environments and tasks. Mobile robots navigate spaces: autonomous mobile robots (AMRs) in warehouses, delivery robots on sidewalks, and inspection robots in industrial plants. Manipulation robots handle objects: collaborative arms that work near people, high-speed pick-and-place robots for packaging, and bin-picking systems that locate and grasp items from clutter. Humanoid robots attract attention because their form factor fits human spaces, but many real deployments prioritize simpler shapes that are easier to engineer and maintain. Drones are also robots, using AI for stabilization, obstacle avoidance, mapping, and target tracking. Each type emphasizes different capabilities, and “AI” may be concentrated in navigation for mobile platforms or in vision and grasping for manipulation systems.

Service robots represent another category: cleaning robots for malls and airports, food preparation robots, and customer-assistance robots in retail. In healthcare, robots range from telepresence units to logistics carts to surgical assistance platforms. In agriculture, robots weed, harvest, and monitor crops using computer vision and predictive models. Security and inspection robots patrol facilities and detect anomalies such as overheating equipment or unauthorized entry. Even within a category, the level of autonomy varies. Some systems operate fully autonomously within a mapped area; others are semi-autonomous, with remote operators intervening when the robot encounters unusual situations. This “human-in-the-loop” model can be a practical stepping stone, enabling faster deployment while data is collected to improve autonomy. Understanding these types helps align expectations: an AI robot designed for warehouse picking is optimized for speed, grasp reliability, and integration with inventory systems, not for conversation or general household chores.

Real-World Applications: From Factories to Homes

Manufacturing remains a major driver of AI robot adoption, but the nature of factory automation is evolving. Traditional industrial robots excel at repetitive tasks like welding and painting in controlled cells. AI-enabled robots expand the range to include tasks that require perception and adaptation: sorting mixed parts, identifying defects, adjusting to small variations in assemblies, and collaborating with human workers. Quality inspection is a standout area, where vision models can detect scratches, alignment issues, or missing components, often more consistently than manual checks. In warehouses and fulfillment centers, autonomous robots handle transport, picking assistance, palletizing, and inventory scanning. These systems reduce walking time for workers and improve throughput, especially during peak demand. The productivity gains come not only from speed but from better orchestration: robots can coordinate routes, prioritize urgent orders, and operate continuously with scheduled charging and maintenance.

Outside industrial settings, AI robots are increasingly used for tasks that enhance safety, convenience, and access to services. Hospitals use delivery robots to move supplies, linens, and medications, reducing staff workload and limiting exposure in sensitive areas. In eldercare, robots can support monitoring, reminders, and mobility assistance, though these uses require careful attention to privacy and dignity. In retail, robots scan shelves for out-of-stock items and pricing errors, producing actionable reports. In agriculture, autonomous platforms detect weeds and apply targeted treatment, which can reduce chemical usage while improving yield. At home, robot vacuums and mops already demonstrate how a combination of mapping, obstacle detection, and scheduling can deliver daily value. The next wave of household robotics will depend on safer manipulation, better object recognition, and more reliable interaction in cluttered environments. Across all these settings, the most successful deployments are those with clear ROI, strong safety protocols, and an operating model that includes monitoring, maintenance, and continuous improvement.

Human-Robot Interaction: Safety, Trust, and Usability

For an AI robot to be useful in human environments, it must interact safely and predictably. Safety begins with physical design: rounded edges, compliant joints, torque limits, emergency stop buttons, and safety-rated sensors. It also includes software behaviors such as speed reduction near people, collision avoidance, and conservative motion planning. Collaborative robots, often called cobots, are designed to share workspace with humans, but collaboration is not automatic; it requires risk assessments, proper tooling, and well-defined tasks. A robot that moves unpredictably erodes trust, even if it is technically safe. Predictability (clear signals, consistent motion, and transparent status indicators) helps humans feel comfortable working alongside a machine. Sound cues, lights, and on-screen messages can communicate intent: turning, stopping, yielding, or requesting assistance.
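Speed reduction near people can be illustrated with a simple ramp: full speed when nobody is close, a linear slowdown as a person approaches, and a full stop inside a minimum distance. This is only a sketch of the idea. The distances and speeds below are made-up assumptions, and real limits come from a site risk assessment and safety-rated hardware, not from application code like this.

```python
def speed_limit(distance_m, max_speed=1.5, stop_dist=0.5, slow_dist=2.0):
    """Return an allowed speed (m/s) given the distance to the nearest person.

    Inside stop_dist the robot must stop; beyond slow_dist it may run at
    max_speed; in between, the limit ramps linearly.
    """
    if distance_m <= stop_dist:
        return 0.0
    if distance_m >= slow_dist:
        return max_speed
    return max_speed * (distance_m - stop_dist) / (slow_dist - stop_dist)


# Hypothetical distances to the nearest detected person, in meters.
limits = [speed_limit(d) for d in (0.3, 1.25, 3.0)]
```

A smooth ramp rather than an abrupt stop also supports predictability: people nearby can see the robot slowing before it halts.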

Usability matters as much as intelligence. A highly capable AI robot can fail operationally if it is hard to configure, difficult to troubleshoot, or disruptive to existing workflows. Successful systems provide intuitive interfaces for mapping areas, defining tasks, and monitoring performance. They also offer clear diagnostics: low battery, sensor obstruction, wheel slip, network loss, or grasp failure. Training staff to work with robots is part of the adoption process, and the best deployments treat this as change management rather than a one-time installation. Trust grows when the robot behaves consistently and when people understand its limitations. If a robot occasionally asks for help, that can be acceptable, sometimes even preferable, if the request is timely and the handoff is smooth. The goal is not to replace human judgment; it is to augment human capability and reduce repetitive strain, risky exposure, and wasted time. When the interaction design is strong, a robot becomes a dependable coworker rather than an unpredictable gadget.

Ethics and Governance: Privacy, Bias, and Accountability

An AI robot often operates where sensitive information exists: hospitals, homes, workplaces, and public spaces. Cameras and microphones can capture personal data, and location tracking can reveal routines. Ethical deployment requires privacy-by-design principles: collect only what is necessary, process data locally when possible, secure transmission and storage, and provide clear retention policies. Consent and transparency are essential, especially in environments where people may not have a choice about being near the robot. Visual indicators that recording is active, signage in public areas, and strict access controls for logs can reduce risk. A robot that maps a facility may inadvertently capture confidential layouts or proprietary processes. Governance must address who can access maps, sensor feeds, and operational analytics, and how long they are kept.

| AI Robot Type | Primary Use | Key Strengths | Common Limitations |
|---|---|---|---|
| Industrial AI Robot | Manufacturing, assembly, welding, quality inspection | High precision and repeatability; 24/7 operation; improved defect detection with vision AI | Less flexible outside trained tasks; integration cost; safety requirements in shared spaces |
| Service AI Robot | Customer support, delivery, cleaning, hospitality, healthcare assistance | Handles routine tasks; improves response times; can navigate semi-structured environments | Navigation and interaction failures in crowded/complex settings; privacy concerns; limited dexterity |
| Personal/Home AI Robot | Companionship, home monitoring, basic chores, education/entertainment | User-friendly interfaces; personalization via learning; smart-home integration | Limited physical capabilities; reliance on connectivity/cloud; data security and ongoing maintenance |

Expert Insight

Define a single, measurable job for the robot (e.g., “pick and place 20 parts per minute with <1% mispicks”) and map the full workflow around it (inputs, handoffs, safety zones, and exception handling) before buying add-ons or expanding scope.

Start with a two-week pilot and track three metrics daily: uptime, error rate, and time-to-recover after a fault. Use the results to refine fixtures, lighting, and maintenance routines, then standardize the winning setup with a simple checklist for operators.
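The three pilot metrics are easy to compute from a basic daily log. The sketch below assumes a hypothetical log format (shift length in minutes, a list of fault start and recovery times, and task counts); the numbers are invented for illustration.

```python
def pilot_metrics(shift_minutes, faults, tasks_attempted, tasks_failed):
    """Compute daily uptime, error rate, and mean time-to-recover.

    faults: list of (fault_start_min, recovered_min) pairs within the shift.
    """
    downtime = sum(recovered - start for start, recovered in faults)
    uptime_pct = 100.0 * (shift_minutes - downtime) / shift_minutes
    error_rate_pct = 100.0 * tasks_failed / tasks_attempted if tasks_attempted else 0.0
    mean_recovery_min = downtime / len(faults) if faults else 0.0
    return {
        "uptime_pct": uptime_pct,
        "error_rate_pct": error_rate_pct,
        "mean_recovery_min": mean_recovery_min,
    }


# Hypothetical day: an 8-hour shift with two faults and 3 failures in 400 tasks.
day = pilot_metrics(
    shift_minutes=480,
    faults=[(60, 72), (300, 306)],
    tasks_attempted=400,
    tasks_failed=3,
)
```

Tracking the same three numbers every day makes it easier to tell whether a change to fixtures, lighting, or maintenance actually helped.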

Bias and fairness can also appear, particularly when robots use vision or audio models trained on limited datasets. If a system performs better in certain lighting conditions, on certain skin tones, or with certain accents, it can create unequal outcomes. In a security context, misidentification risks can be serious. Accountability is another challenge: when a robot causes damage or injury, responsibility may be shared across manufacturers, integrators, operators, and site managers. Clear audit trails, version control for models, incident reporting, and safety certifications help establish accountability. Ethical practice includes rigorous testing, ongoing monitoring for drift, and a willingness to pause or roll back features that behave unpredictably. The most responsible organizations treat AI robotics as a socio-technical system: performance, safety, and fairness depend on data, environment, training, policies, and human oversight, not just on code.

Business Value: ROI, Productivity, and Operational Resilience

Organizations adopt an AI robot when it can reliably improve performance metrics such as throughput, quality, safety, or cost. The value often comes from removing bottlenecks: reducing manual walking in warehouses, speeding up inspection, increasing uptime through predictive maintenance, or enabling 24/7 operations in controlled settings. Another major benefit is consistency. Robots do not tire, and AI-based inspection can apply the same standards on every unit. That consistency can reduce returns, improve customer satisfaction, and support compliance in regulated industries. However, ROI calculations must be realistic. Costs include hardware, integration, training, maintenance, spare parts, software licensing, and ongoing support. There may also be facility modifications such as improved Wi-Fi coverage, charging stations, safety barriers, or changes to storage layouts.
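A simple payback calculation shows why recurring costs belong in the ROI picture. This is a deliberately rough sketch: the function and all figures are hypothetical, and it ignores financing, depreciation, and ramp-up time.

```python
def payback_months(upfront_cost, monthly_savings, monthly_operating_cost):
    """Months needed to recover upfront costs from net monthly savings.

    Returns None when operating costs meet or exceed savings (no payback).
    """
    net_monthly = monthly_savings - monthly_operating_cost
    if net_monthly <= 0:
        return None
    return upfront_cost / net_monthly


# Hypothetical figures: hardware plus integration, training, and facility changes.
upfront = 90_000 + 20_000 + 10_000
months = payback_months(
    upfront_cost=upfront,
    monthly_savings=9_000,         # assumed labor and quality savings
    monthly_operating_cost=1_500,  # assumed licensing, maintenance, support
)
```

With these assumed numbers the payback period is 16 months; leaving out the operating cost would make it look closer to 13, which is the kind of optimism a realistic calculation avoids.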

Operational resilience is an increasingly important driver. Labor shortages, demand spikes, and supply chain disruptions push organizations to seek flexible automation. AI-enabled robots can be redeployed to new tasks more easily than fixed automation, especially when software updates and modular tooling are part of the design. A mobile robot fleet can scale by adding units and updating routing policies. A robotic arm with vision can handle new packaging formats with retraining and calibration rather than a full mechanical redesign. Still, resilience requires planning: spare units, robust maintenance schedules, and clear procedures for when a robot fails mid-task. The best business outcomes come when robotics is integrated with existing systems (warehouse management, manufacturing execution, asset tracking, and scheduling) so that the robot is not an isolated tool but part of a coordinated operation. When implemented thoughtfully, an AI robot can deliver measurable gains while also improving working conditions by reducing repetitive strain and exposure to hazardous environments.

Technical Challenges: Reliability, Data, and the Reality Gap

Despite rapid progress, building a dependable AI robot remains challenging because the physical world is messy. Lighting changes, reflective surfaces confuse sensors, dust and vibrations degrade performance, and cluttered scenes create occlusions. Objects vary in shape, material, and packaging, and humans behave unpredictably. Small errors compound: a slightly miscalibrated camera can lead to inaccurate grasping; a worn wheel can degrade localization; a delayed network connection can affect coordination. Reliability is not only about model accuracy; it is about end-to-end performance across sensors, mechanics, software, and environment. This is why field testing is so important and why many deployments proceed in phases: pilot, limited rollout, and then scale. Each phase reveals edge cases that lab testing missed.

Data is both a fuel and a constraint. Training robust perception models often requires diverse datasets that reflect real operating conditions: different seasons, lighting, floor textures, product variants, and human behaviors. Collecting and labeling data can be expensive, and privacy constraints may limit what can be stored. Simulation helps, but it introduces the “reality gap”: behaviors learned in simulation may not transfer perfectly to real hardware due to differences in friction, sensor noise, and unmodeled dynamics. Techniques like domain randomization, fine-tuning on real data, and careful system identification can reduce the gap, but they do not eliminate it. Another challenge is model drift: as environments change (new products, new layouts, new uniforms), performance can degrade. Continuous monitoring and periodic retraining become part of operations. A successful AI robot program treats deployment as an ongoing lifecycle, with updates, validation, and maintenance akin to managing a fleet of vehicles rather than installing a static piece of machinery.
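Continuous monitoring for drift can start very simply: compare a rolling success rate against the rate measured at validation time and raise a flag when it drops too far. The class below is a minimal sketch of that idea; the name `DriftMonitor`, the window size, and the tolerance are assumptions for illustration, and production systems often use more careful statistical tests.

```python
from collections import deque


class DriftMonitor:
    """Flags possible drift when the rolling success rate falls below
    the validated baseline minus a tolerance."""

    def __init__(self, baseline_rate, window=100, tolerance=0.05):
        self.threshold = baseline_rate - tolerance
        self.outcomes = deque(maxlen=window)  # keeps only the most recent outcomes

    def record(self, success):
        """Record one task outcome; return True if drift is suspected."""
        self.outcomes.append(bool(success))
        if len(self.outcomes) < self.outcomes.maxlen:
            return False  # not enough data yet to judge
        rate = sum(self.outcomes) / len(self.outcomes)
        return rate < self.threshold


# Hypothetical grasp outcomes: ten successes, then failures after a layout change.
monitor = DriftMonitor(baseline_rate=0.95, window=10, tolerance=0.05)
warmup_alerts = [monitor.record(True) for _ in range(10)]
monitor.record(False)
drift_suspected = monitor.record(False)
```

A flag like this does not say why performance dropped; it is a prompt to inspect recent data and decide whether retraining or recalibration is needed.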

Connectivity and Edge Computing: Where the Intelligence Lives

Deciding where an AI robot “thinks” is a practical engineering choice with big consequences. On-device or edge computing means the robot processes data locally, reducing latency and improving privacy. This is important for real-time control and for environments with unreliable connectivity. For example, obstacle avoidance and motion control typically run on the robot because milliseconds matter. Edge computing also helps keep sensitive sensor data within the facility, which can simplify compliance. The tradeoff is power consumption, heat, and hardware cost. High-performance GPUs or specialized AI accelerators add expense and require thermal management, especially in compact robots.

Cloud computing can complement the edge by handling heavier workloads: large-scale map building, fleet coordination, analytics, long-term storage, and model training. A hybrid approach is common: immediate decisions on the robot, strategic decisions in the cloud. Fleet management benefits greatly from centralized optimization, where routes, task assignments, and charging schedules are computed across many units. Connectivity introduces security requirements: encrypted communication, authentication, secure boot, and patch management. A robot is a networked computer with wheels or arms, and vulnerabilities can have physical consequences. Organizations must manage updates carefully, ensuring that new software and model versions are validated before rollout. When connectivity and compute are designed well, an AI robot becomes easier to scale and improve over time, gaining new capabilities through updates while maintaining stable, safe core behaviors.
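One way to reason about the hybrid split is a placement rule: anything that must respond faster than the network round trip, or that touches sensitive data, stays on the robot; everything else can go to the cloud. The sketch below encodes that rule. The function name, workloads, and latency figures are hypothetical assumptions, and real placement decisions also weigh compute, power, and cost.

```python
def place_workload(latency_budget_ms, network_round_trip_ms, sensitive_data=False):
    """Return "edge" or "cloud" for a workload under a simple placement rule."""
    if sensitive_data or latency_budget_ms <= network_round_trip_ms:
        return "edge"
    return "cloud"


ROUND_TRIP_MS = 80  # assumed round-trip time to the cloud from the facility

placements = {
    "obstacle_avoidance": place_workload(10, ROUND_TRIP_MS),
    "fleet_route_optimization": place_workload(5_000, ROUND_TRIP_MS),
    "raw_camera_processing": place_workload(5_000, ROUND_TRIP_MS, sensitive_data=True),
}
```

Under these assumptions, obstacle avoidance and raw camera processing stay on the robot while fleet route optimization moves to the cloud, matching the "immediate on the robot, strategic in the cloud" split.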

Choosing and Deploying an AI Robot: Practical Considerations

Selecting an AI robot starts with task definition and environment assessment. The most successful projects identify a narrow, high-impact workflow and measure it precisely: cycle time, error rates, labor hours, injury risk, and peak demand. The environment matters: floor quality for mobile robots, lighting for vision systems, object variability for picking, and safety requirements for shared workspaces. Integration requirements should be clarified early. A robot that cannot communicate with inventory systems, scheduling tools, or quality databases may create manual workarounds that erode ROI. It is also important to evaluate vendor support, spare parts availability, and the maturity of the software stack. A robot is not a one-time purchase; it is an ongoing relationship that includes updates, service, and performance tuning.

Deployment planning should include safety certification, operator training, and clear escalation paths. If the robot encounters an edge case, who responds, how quickly, and with what tools? Many organizations benefit from starting with a pilot that runs long enough to encounter real variability: shift changes, seasonal lighting, different operators, and routine disruptions. Metrics should be tracked continuously, not just at the beginning and end. Maintenance is another key factor: cleaning sensors, checking calibration, replacing wear parts, and monitoring battery health. A strong operational playbook makes the difference between a robot that quietly delivers value and one that becomes an unreliable novelty. When the workflow is chosen carefully and the rollout is managed like a production system, an AI robot can become a stable part of daily operations, improving performance while freeing people to focus on tasks that require judgment, creativity, and interpersonal skills.

The Future of the AI Robot: What to Expect Next

The next generation of AI robot systems will likely be defined by better generalization, safer manipulation, and more natural interaction. Advances in multimodal models, systems that combine vision, language, audio, and action, are making it easier for robots to interpret complex scenes and follow higher-level instructions. Improvements in tactile sensing and compliant actuation will help robots handle delicate objects, open packaging, and perform tasks that require subtle force control. Standardization will also play a role: common software frameworks, safety practices, and modular hardware can reduce integration cost and speed up deployment. At the same time, regulatory attention will increase as robots move into public spaces and sensitive environments, pushing the industry toward clearer accountability, stronger cybersecurity, and more transparent data practices.

Economic and societal factors will shape adoption as much as technical progress. Organizations will prioritize systems that deliver measurable value quickly, are easy to maintain, and can be redeployed as needs change. Workers will expect robots to improve safety and reduce drudgery rather than simply increase surveillance or workload pressure. Public acceptance will depend on visible safety, respectful design, and clear boundaries around data collection. Over time, the most common AI robot may not be a humanoid assistant but a fleet of specialized machines that quietly handle transport, inspection, cleaning, and basic manipulation in the background of daily life. The direction is clear: as sensing improves, compute becomes more efficient, and software becomes more robust, the AI robot will shift from novelty to infrastructure: an everyday tool that helps organizations and communities operate more safely, efficiently, and reliably.

Frequently Asked Questions

What is an AI robot?

An AI robot is a physical machine that uses artificial intelligence to perceive its environment, make decisions, and act autonomously or semi-autonomously.

How is an AI robot different from a regular robot?

Regular robots typically follow preprogrammed rules, while AI robots can learn from data, adapt to new situations, and handle variability with perception and reasoning.

What are common uses of AI robots today?

They’re used in manufacturing, warehouses, healthcare assistance, home cleaning, delivery, agriculture, security patrol, and exploration in hazardous environments.

What technologies make AI robots work?

Key components include sensors (cameras, lidar), computer vision, machine learning, planning/control algorithms, mapping (SLAM), and onboard or cloud computing.

Are AI robots safe to use around people?

With the right mix of sensors, built-in fail-safes, strict speed and force limits, and thorough testing, an AI robot can be very safe to use, but real safety always comes down to the specific machine and the environment it operates in.

What are the main limitations of AI robots?

An AI robot can still face challenges in the real world: it may struggle with rare edge cases, need large amounts of data and careful tuning, run up against battery and hardware limits, and be costly to deploy and maintain over time.
