Table of Contents

- My Personal Experience

- What Text to Image AI Really Is and Why It Matters

- How Text to Image AI Works: From Prompts to Pixels

- Key Benefits for Creators, Marketers, and Businesses

- Common Use Cases: From Concept Art to E-Commerce Visuals

- Choosing a Text to Image AI Tool: Practical Criteria

- Prompt Engineering Basics: Getting Better Images with Fewer Attempts

- Advanced Techniques: Image-to-Image, Inpainting, and Style Consistency

- Expert Insight

- Quality Control: How to Evaluate and Improve AI-Generated Images

- SEO and Content Marketing: Using AI Images Without Hurting Performance

- Ethics, Copyright, and Brand Safety Considerations

- Future Trends: Where Text to Image AI Is Headed

- Building a Sustainable Workflow with Text to Image AI

- Watch the demonstration video

- Frequently Asked Questions

My Personal Experience

I tried a text-to-image AI for the first time when I needed a quick visual for a small presentation at work and didn’t have the budget (or time) to hire an illustrator. I typed in a rough prompt (something like “a cozy neighborhood café at sunrise, watercolor style”) and the first results were honestly a little off: weird hands, mismatched shadows, and details that didn’t make sense. But after a few tweaks, like specifying the angle, lighting, and what to avoid, it started producing images that were surprisingly close to what I had in mind. What stuck with me was how it felt less like pressing a magic button and more like learning a new way to communicate, almost like giving directions to a very fast, very literal artist. I still wouldn’t call it perfect, but it saved me hours and made me think differently about how I sketch ideas before I commit to a final design.

What Text to Image AI Really Is and Why It Matters

Text to image AI is a category of generative artificial intelligence that creates pictures from written descriptions, turning language into visual output in seconds. The core idea is simple: you type a prompt such as “a cozy cabin in the snow at dusk, warm window light, cinematic lighting, ultra-detailed,” and the system synthesizes an image that matches the request. Under the hood, however, the process is complex. Modern generators learn patterns from massive datasets of images and associated text, building an internal representation of how words relate to shapes, colors, styles, and compositions. When you provide a prompt, the model predicts and refines pixels (or latent representations of pixels) until the final render aligns with the meaning and aesthetics implied by your text. This shift has changed how teams approach ideation, mockups, storyboards, product concepts, editorial illustrations, and social creatives. Instead of searching for the “closest” stock photo, creators can specify exactly what they need, including mood, era, camera angle, art medium, and brand palette.

Beyond speed, the impact comes from accessibility and iteration. Before text-driven generation, high-quality visual production usually required specialized skills—photography, illustration, 3D modeling, or design software expertise—plus time and budget. With text to image AI, a marketer can explore a dozen campaign directions during a single meeting, a game developer can quickly test environment concepts, and a small business can generate on-brand visuals without a full studio pipeline. That doesn’t eliminate the need for human taste or craftsmanship; it changes where effort goes. The most valuable skills become prompt writing, art direction, curation, editing, and ethical judgment. As tools mature, the best results tend to come from a hybrid workflow: using AI to generate options and accelerate exploration, then refining outputs with traditional editing, compositing, typography, and brand governance. This combination is why text-conditioned image generation has become a practical production capability rather than a novelty.

How Text to Image AI Works: From Prompts to Pixels

Most leading text to image AI systems today rely on diffusion-based methods or related latent generative techniques. In diffusion, the model learns to reverse a noising process. During training, images are gradually corrupted with noise over many steps; the model learns how to remove that noise while being guided by text embeddings—numerical representations of your prompt. At generation time, the process starts from random noise and repeatedly denoises it, step by step, guided by the prompt so the emerging picture matches the described subject and style. Many generators operate in a latent space rather than raw pixel space, which speeds up rendering and improves consistency. A separate decoder then converts the latent representation into a visible image. This is why prompt wording can influence not just subject matter but also lighting, lens effects, depth of field, and illustration style: the model has learned statistical relationships between those concepts and visual traits across its training data.
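The denoising loop described above can be sketched with a toy example. This is a minimal illustration, not how any production generator is implemented: the "image" is a four-value list, `TOY_CONCEPTS` and `predict_clean` are hypothetical stand-ins for a trained text-conditioned network, and the linear noise schedule in `alpha_bar` is an assumption chosen for simplicity.

```python
import math
import random

# Hypothetical stand-in for a trained, text-conditioned network: it "knows"
# which clean signal each prompt maps to. A real model learns this from data.
TOY_CONCEPTS = {
    "bright": [1.0, 1.0, 1.0, 1.0],
    "gradient": [0.0, 0.33, 0.66, 1.0],
}

def alpha_bar(t, total_steps):
    """Fraction of signal kept at step t (1.0 = clean, near 0 = mostly noise)."""
    return 1.0 - 0.999 * t / total_steps

def add_noise(clean, t, total_steps, rng):
    """Forward (training-time) process: blend the clean signal with Gaussian noise."""
    a = alpha_bar(t, total_steps)
    return [math.sqrt(a) * x + math.sqrt(1.0 - a) * rng.gauss(0.0, 1.0) for x in clean]

def predict_clean(noisy, prompt):
    """Toy denoiser: ignores the noisy input and returns the prompt's target."""
    return TOY_CONCEPTS[prompt]

def generate(prompt, total_steps=20, seed=0):
    """Reverse process: start from random noise and denoise step by step."""
    rng = random.Random(seed)
    x = [rng.gauss(0.0, 1.0) for _ in TOY_CONCEPTS[prompt]]
    for t in range(total_steps, 0, -1):
        a_t = alpha_bar(t, total_steps)
        a_prev = alpha_bar(t - 1, total_steps)
        x0_hat = predict_clean(x, prompt)
        # Noise implied by the current sample and the predicted clean signal.
        eps_hat = [(xi - math.sqrt(a_t) * x0) / math.sqrt(1.0 - a_t)
                   for xi, x0 in zip(x, x0_hat)]
        # Deterministic (DDIM-style) move to the slightly less noisy level.
        x = [math.sqrt(a_prev) * x0 + math.sqrt(1.0 - a_prev) * e
             for x0, e in zip(x0_hat, eps_hat)]
    return x
```

Because the stand-in denoiser already knows the answer, the loop always lands on the target. A real model only approximates the clean image at each step, which is one reason step count and sampler choice can change the result.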

Prompt adherence depends on several factors: the model’s training distribution, its text encoder quality, sampling settings, and how well your instruction disambiguates the scene. If a prompt includes multiple subjects (“a corgi wearing sunglasses riding a skateboard in Tokyo at night, neon reflections, rain”), the system must allocate attention to each element. Some tools provide controls like guidance scale (how strongly the prompt influences the image), steps (how many denoising iterations), seed (a reproducible random starting point), aspect ratio, and negative prompts (things you explicitly want to avoid, such as “no text, no watermark, no extra limbs”). Understanding these controls helps produce reliable outcomes and reduces the trial-and-error cycle. Even with excellent settings, text to image AI can struggle with fine details like legible typography, hands, precise logos, or exact product geometry—areas where post-editing or specialized models are often necessary.
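To make these controls concrete, here is a small sketch. `GenerationSettings` is a hypothetical container (the field names and default values are illustrative, not taken from any particular tool), and `guided_prediction` shows classifier-free guidance, a widely used formulation of the guidance scale; individual tools may implement guidance differently.

```python
from dataclasses import dataclass
import random

@dataclass(frozen=True)
class GenerationSettings:
    """Hypothetical bundle of the controls described above."""
    prompt: str
    negative_prompt: str = ""
    guidance_scale: float = 7.5   # illustrative default, not a recommendation
    steps: int = 30
    seed: int = 0
    width: int = 1024
    height: int = 1024

def guided_prediction(uncond, cond, guidance_scale):
    """Classifier-free guidance: move the prediction toward the prompted one.

    uncond: model output without the prompt; cond: output with the prompt.
    A scale of 0 ignores the prompt, 1 uses the prompted output as-is,
    and larger values exaggerate the difference between the two.
    """
    return [u + guidance_scale * (c - u) for u, c in zip(uncond, cond)]

def initial_noise(settings, size=4):
    """Same seed gives the same starting noise, hence a reproducible start."""
    rng = random.Random(settings.seed)
    return [rng.gauss(0.0, 1.0) for _ in range(size)]
```

In some Stable Diffusion pipelines the negative prompt takes the place of the unprompted input, so the same formula also steers the image away from the excluded concepts.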

Key Benefits for Creators, Marketers, and Businesses

Text to image AI delivers immediate value through speed, breadth of exploration, and cost efficiency. For marketing and branding teams, it enables rapid concept development: hero images for landing pages, ad variations for A/B testing, seasonal campaign visuals, and social media graphics that match a specific vibe. Designers can generate mood boards in minutes, exploring color palettes and art directions without sourcing dozens of references. Product teams can visualize early-stage ideas for packaging, user interfaces in context, or lifestyle scenes that suggest how an item fits into a customer’s day. For content creators, it provides custom thumbnails, blog illustrations, and background scenes that can be tuned to a niche audience. The biggest advantage is iteration: you can change one variable—lighting, camera angle, setting, or style—and instantly see alternatives, which supports better decision-making before money is spent on final production.

Another major benefit is personalization at scale. Traditional creative production often forces a tradeoff between customization and budget. With text to image AI, a brand can produce localized visuals for different regions, seasons, and audience segments while maintaining a consistent art direction. For example, a travel company can generate destination-specific imagery that matches its signature color grading and composition. An e-commerce store can create lifestyle scenes that reflect different demographics and environments without organizing multiple photoshoots. This does not mean replacing photographers or illustrators; rather, it can reduce the number of exploratory shoots and focus professional effort on the highest-impact assets. When integrated into a workflow with human review, brand guidelines, and legal checks, generative imagery can expand creative capacity and shorten timelines without sacrificing quality.

Common Use Cases: From Concept Art to E-Commerce Visuals

The range of applications for text to image AI is broad because any scenario that benefits from fast visualization can leverage it. In entertainment and game development, it’s used for concept art, environment exploration, character mood studies, and storyboarding. Writers and directors can test the look of a scene before committing to a production design. In publishing and editorial, it can produce spot illustrations, abstract metaphors, and cover concept drafts. In education, instructors can generate custom diagrams or historical scenes to support lessons, provided they are careful about accuracy and bias. In UX and product design, teams can create contextual mockups—devices on desks, hands holding products, or interfaces displayed in real-world settings—helping stakeholders visualize use cases early.

For commerce, text to image AI can assist with lifestyle imagery, seasonal banners, and product-in-context scenes. A brand selling coffee equipment might generate “morning kitchen counter” scenes in multiple styles: Scandinavian minimalism, rustic farmhouse, modern industrial, or cozy vintage. Even if the final image must be a real photograph for compliance or authenticity, AI can be used to pitch concepts, plan shoots, and align stakeholders on an art direction. Real estate and hospitality can create conceptual staging ideas or renovation mood boards. Nonprofits can produce campaign visuals that communicate themes without relying on potentially sensitive real-world photography. Across these use cases, the best outcomes come when teams treat AI outputs as drafts and design elements—subject to review, refinement, and alignment with brand and ethical standards.

Choosing a Text to Image AI Tool: Practical Criteria

Selecting a text to image AI platform depends on quality, control, licensing, privacy, and workflow fit. Quality includes realism, style range, and how well the system follows complex prompts. Control includes aspect ratios, seeds, negative prompts, inpainting (editing a selected area), outpainting (extending the canvas), and image-to-image options (using a reference image to guide composition). Some tools offer “style presets” that can speed up consistent output, while others provide advanced parameters for fine tuning. If a team needs predictable, repeatable results—like a series of images for a campaign—seed control and consistent model versions matter. Without versioning, a prompt that worked last month might produce different results after a model update.

Licensing and legal terms are equally important. Different providers offer different rights for commercial use, and restrictions may apply to sensitive categories. Businesses should review whether generated images can be used in ads, on product packaging, or as part of a trademarked brand identity. Privacy also matters: if prompts include confidential product details, you may prefer a tool with strong data policies, enterprise agreements, or local deployment options. Workflow integration can be decisive: teams may need API access, collaboration features, or compatibility with design software. The most effective approach is to test a short list of tools using the same prompt set, then compare results for fidelity, consistency, speed, and how much manual cleanup is required. That evaluation reveals the true production cost of each option.
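One way to keep such a comparison honest is a simple weighted scorecard. The sketch below is only an illustration: the criteria names, the weights in `CRITERIA_WEIGHTS`, and the 1-to-5 rating scale are assumptions a team would replace with its own.

```python
# Hypothetical weights; "low_cleanup" is rated higher when less manual
# cleanup was needed, so that a higher score is always better.
CRITERIA_WEIGHTS = {
    "fidelity": 0.4,
    "consistency": 0.3,
    "speed": 0.1,
    "low_cleanup": 0.2,
}

def score_tool(ratings, weights=CRITERIA_WEIGHTS):
    """Weighted average of per-criterion ratings (for example, 1 to 5)."""
    total_weight = sum(weights.values())
    return sum(ratings[name] * w for name, w in weights.items()) / total_weight

def rank_tools(all_ratings, weights=CRITERIA_WEIGHTS):
    """Return tool names sorted from best to worst weighted score."""
    return sorted(
        all_ratings,
        key=lambda tool: score_tool(all_ratings[tool], weights),
        reverse=True,
    )
```

Rating every tool on the same prompt set before looking at the totals helps avoid adjusting scores to fit a favorite.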

Prompt Engineering Basics: Getting Better Images with Fewer Attempts

Successful prompting for text to image AI combines clear subject definition, compositional direction, and stylistic cues, while avoiding contradictions. Start with the core subject (“a ceramic mug of matcha latte”), then add environment (“on a wooden table near a window”), lighting (“soft morning light, gentle shadows”), camera framing (“35mm lens, shallow depth of field, close-up”), and style (“high-end product photography, natural color grading”). If you want an illustrated look, specify the medium (“watercolor,” “ink sketch,” “3D render,” “paper cutout collage”) and optionally reference art movements (“Art Nouveau,” “mid-century modern”) rather than living artists, which can reduce ethical concerns. When you need consistency across a series, keep a stable prompt template and only swap a few variables, such as product color or background setting.
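The layered structure above maps naturally onto a small template helper. This is a minimal sketch; `build_prompt`, `prompt_series`, and the comma-separated ordering are illustrative choices, not a format required by any tool.

```python
def build_prompt(subject, environment="", lighting="", framing="", style=""):
    """Join the non-empty parts in a fixed order, with the subject first."""
    parts = [subject, environment, lighting, framing, style]
    return ", ".join(p.strip() for p in parts if p and p.strip())

# A stable template: everything except the subject stays fixed across a series.
PRODUCT_TEMPLATE = {
    "environment": "on a wooden table near a window",
    "lighting": "soft morning light, gentle shadows",
    "framing": "35mm lens, shallow depth of field, close-up",
    "style": "high-end product photography, natural color grading",
}

def prompt_series(subjects, template):
    """Swap only the subject while keeping the rest of the template unchanged."""
    return [build_prompt(subject, **template) for subject in subjects]
```

For example, `prompt_series(["a ceramic mug of matcha latte", "a glass of iced coffee"], PRODUCT_TEMPLATE)` yields two prompts that differ only in their subject.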

Negative prompts and constraints can dramatically improve results. If you see unwanted artifacts (extra limbs, distorted faces, random text), add exclusions like “no text, no watermark, no logo, no deformed hands.” If the model keeps adding busy backgrounds, specify “clean background” or “minimal composition.” If the subject is consistently off-center, explicitly request “centered composition” or “rule of thirds” placement. For brand work, add color constraints (“palette of navy, cream, and gold”), and include layout instructions for typography (such as leaving empty space for a headline) only when you plan to add the text later in a design tool, because AI-generated lettering is often unreliable. Finally, treat prompting as art direction: generate multiple candidates, pick the best, and refine with targeted edits. The fastest workflow often involves a few broad explorations, then a narrowed set of iterations where you adjust one parameter at a time to converge on the desired look.
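Exclusions are easier to keep consistent when they come from a shared lookup rather than being retyped. The sketch below assumes a hypothetical mapping, `ARTIFACT_EXCLUSIONS`, from artifacts noticed during review to exclusion terms. Whether terms need a “no ” prefix depends on the tool: a dedicated negative-prompt field usually takes the bare terms, while inline exclusions in the main prompt are typically written as “no text”.

```python
# Hypothetical mapping from artifacts seen in review to exclusion terms.
ARTIFACT_EXCLUSIONS = {
    "random text": "text",
    "watermark": "watermark",
    "logo": "logo",
    "extra limbs": "extra limbs",
    "distorted hands": "deformed hands",
}

def negative_prompt(observed_artifacts, prefix=""):
    """Build a de-duplicated exclusion list, keeping first-seen order.

    Use prefix="" for a dedicated negative-prompt field and prefix="no "
    when the exclusions are appended to the main prompt instead.
    """
    terms = []
    for artifact in observed_artifacts:
        term = ARTIFACT_EXCLUSIONS.get(artifact)
        if term and term not in terms:
            terms.append(term)
    return ", ".join(prefix + term for term in terms)
```

Unknown artifact labels are skipped silently here; a stricter version could raise an error so the lookup stays complete.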

Advanced Techniques: Image-to-Image, Inpainting, and Style Consistency

When pure text prompts don’t provide enough control, advanced features can help. Image-to-image generation uses a reference image (a sketch, a photo, or a prior AI output) and transforms it while preserving key structure. This is useful for maintaining a specific pose, layout, or product silhouette. For example, if you have a rough composition for a banner, image-to-image can keep the layout stable while exploring different lighting styles or environments. Strength settings typically control how much the output deviates from the input: lower strength preserves more details, higher strength allows more creative changes. This technique is especially valuable for teams that need consistent framing across multiple assets, such as a set of product hero images with identical composition.
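One common way to realize a strength setting, assumed here for illustration rather than taken from any specific tool, is to noise the reference image only part of the way and then run just the remaining denoising steps. The hypothetical helper below shows the resulting arithmetic.

```python
def img2img_steps(total_steps, strength):
    """Return (start_step, steps_run) for a strength between 0 and 1.

    strength 0.0: the reference is left untouched (no denoising steps run).
    strength 1.0: the reference is fully noised and every step runs, so the
    output behaves almost like plain text-to-image.
    """
    if not 0.0 <= strength <= 1.0:
        raise ValueError("strength must be between 0 and 1")
    steps_run = min(int(total_steps * strength), total_steps)
    start_step = total_steps - steps_run
    return start_step, steps_run
```

With 40 total steps, a strength of 0.25 runs only the last 10 steps, which is why low strength values preserve most of the reference's structure.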

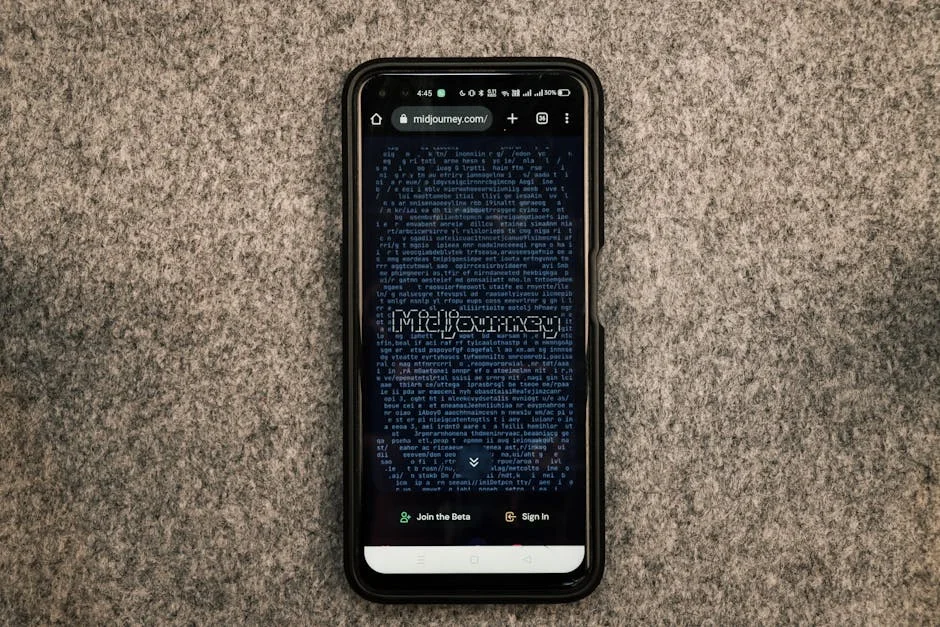

| Option | Best for | Strengths | Limitations |
|---|---|---|---|
| Midjourney | Stylized, high-aesthetic images | Strong artistic output, great composition and lighting, fast iteration via prompts | Less control over exact layouts/text, workflow often tied to Discord, can be inconsistent with fine details |
| DALL·E | General-purpose text-to-image and concept exploration | Good prompt understanding, solid variety of styles, convenient editing/outpainting options | May produce softer realism than specialized models, text rendering can be unreliable, occasional prompt sensitivity |
| Stable Diffusion | Customization and local/enterprise workflows | Open ecosystem, runs locally, extensive control (models, LoRAs, ControlNet), reproducible pipelines | Setup/compute required, quality varies by model, more tuning needed to match top-tier aesthetics |

Expert Insight

Write prompts like a brief: specify subject, setting, lighting, lens/style cues, and composition (e.g., “three-quarter view,” “shallow depth of field,” “rule of thirds”). Add 2–3 “must-have” details and 1–2 “avoid” notes (like “no text, no watermark”) to reduce unwanted elements.

Iterate with intent: change one variable at a time (camera angle, color palette, or material), then compare results side by side. Save the best prompt as a template and reuse it with swapped subjects to keep a consistent look across a series.

Inpainting and outpainting are production-friendly capabilities that turn text to image AI into an editing tool rather than a one-shot generator. Inpainting lets you mask a region—like a face, a hand, or a background object—and regenerate only that area with a prompt. It’s ideal for fixing artifacts, adjusting expressions, changing wardrobe colors, or removing unwanted elements. Outpainting extends an image beyond its original borders, useful for adapting a vertical image to a horizontal header or creating additional background space for typography. For style consistency across a brand, some platforms offer style references, “look” embeddings, or custom model training. While custom training can improve coherence for a recurring character or product line, it introduces governance needs: dataset curation, rights management, and ongoing evaluation to ensure the model doesn’t drift. A disciplined approach—locked prompts, controlled seeds, and a defined post-processing pipeline—usually delivers the most consistent results.
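Conceptually, both features reduce to a mask that marks which pixels may change. The sketch below uses tiny grayscale images as nested lists; `composite` and `extend_canvas` are hypothetical helpers that show the masking idea only, not the generation step itself.

```python
def composite(original, generated, mask):
    """Inpainting merge: keep original pixels where mask is 0,
    take generated pixels where mask is 1."""
    return [
        [m * g + (1 - m) * o for o, g, m in zip(orow, grow, mrow)]
        for orow, grow, mrow in zip(original, generated, mask)
    ]

def extend_canvas(image, left, right, fill=0):
    """Outpainting setup: pad each row and mark the new columns (mask = 1)
    as the region a generator would be asked to fill."""
    padded = [[fill] * left + list(row) + [fill] * right for row in image]
    mask = [[1] * left + [0] * len(row) + [1] * right for row in image]
    return padded, mask
```

Real tools usually feather the mask edge so the regenerated region blends into its surroundings; the hard 0/1 mask here is a simplification.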

Quality Control: How to Evaluate and Improve AI-Generated Images

Quality control for text to image AI goes beyond “does it look cool.” For marketing and product contexts, evaluate realism, anatomy, perspective, lighting coherence, and brand alignment. Check for common issues: inconsistent shadows, impossible reflections, warped backgrounds, and subtle distortions in hands, teeth, jewelry, or product edges. Zoom in and inspect details, because artifacts can hide at thumbnail size and become obvious when used in print or large displays. If the image includes contextual elements like food, medical scenes, or safety equipment, verify that the depiction is plausible and not misleading. For regulated industries, misrepresentation can create compliance risks. Also consider cultural sensitivity: backgrounds, clothing, symbols, and implied narratives may carry unintended meanings across different audiences.

Improvement is often a combination of better prompts and targeted edits. If outputs are consistently missing a key attribute, move that attribute earlier in the prompt and simplify competing instructions. If the composition is cluttered, explicitly ask for a “minimal scene” and limit the number of objects. If faces look uncanny, try switching to a model tuned for portraits or request “natural skin texture, realistic proportions” while avoiding overly intense “hyper-real” keywords that can produce plastic textures. For product work, consider generating background plates separately from product renders, then compositing in a design tool for accuracy. Post-processing steps (color correction, sharpening, noise control, and artifact cleanup) can elevate an AI image from “good enough” to professional. A repeatable review checklist, plus a small library of proven prompt templates, helps teams maintain consistent standards as they scale production.

SEO and Content Marketing: Using AI Images Without Hurting Performance

Text to image AI can support content marketing when used thoughtfully, but it should not undermine page speed, accessibility, or trust. Large, unoptimized images can slow down Core Web Vitals, especially on mobile. Export in modern formats like WebP or AVIF, compress appropriately, and serve responsive sizes via srcset so browsers download only what they need. Use descriptive file names that reflect the topic and intent rather than generic exports. Alt text should describe what is visible and relevant to the page, not keyword-stuffed. If an image is decorative, consider empty alt attributes so screen readers aren’t burdened. When visuals convey important information, ensure the text alternative captures the meaning. These steps help search engines and users understand the content while keeping the experience fast and accessible.
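As an illustration of the markup involved, the sketch below builds an `<img>` tag with a `srcset`. The `name-width.ext` file naming scheme and the `responsive_img` helper are assumptions for this example; the exported files are expected to exist already.

```python
from html import escape

def responsive_img(base_name, widths, alt, ext="webp", sizes="100vw"):
    """Build an <img> tag whose srcset lists one file per exported width,
    for example 'cozy-cabin-dusk-800.webp 800w'."""
    widths = sorted(widths)
    srcset = ", ".join(f"{base_name}-{w}.{ext} {w}w" for w in widths)
    fallback = f"{base_name}-{widths[-1]}.{ext}"  # largest size as plain src
    return (
        f'<img src="{escape(fallback)}" srcset="{escape(srcset)}" '
        f'sizes="{escape(sizes)}" alt="{escape(alt)}">'
    )
```

Passing `alt=""` produces an empty alt attribute, which is the usual way to mark an image as decorative so screen readers skip it.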

Trust is another factor. Readers may react differently to AI-generated visuals depending on context. For editorial topics, overly synthetic imagery can feel less credible, while for creative inspiration it may be perfectly acceptable. Consider a consistent visual policy: use AI images for conceptual illustrations, backgrounds, and abstract themes, while relying on real photography for claims about real-world events, people, or products that require accuracy. If your brand values transparency, a subtle disclosure in an image credit line can help maintain trust without distracting from the content. Also avoid images that resemble recognizable individuals unless you have clear rights and consent, and avoid using AI to imitate proprietary styles in a way that could cause confusion. When integrated with strong design and performance practices, AI imagery can improve engagement metrics (time on page, scroll depth, and shareability) without compromising SEO fundamentals.

Ethics, Copyright, and Brand Safety Considerations

Using text to image AI responsibly requires attention to copyright, dataset provenance, and the risk of generating content that infringes on others’ rights. Policies vary by provider, and laws differ by jurisdiction, but practical risk management is universal. Avoid prompting for trademarked logos, brand mascots, or recognizable characters you don’t own. Be cautious with prompts that request a “style of” a living artist, particularly for commercial work, because it can raise ethical concerns and reputational risk. If you need a specific look, define it through descriptive attributes—color palette, brushwork, era, medium, composition—rather than direct emulation. For brands, it’s wise to develop internal guidelines: what categories are allowed, what requires review, and what is prohibited. This reduces the chance of accidentally generating sensitive or infringing imagery during fast-paced production.

Brand safety also includes avoiding harmful stereotypes and biased depictions. Because models learn from data that may include societal biases, outputs can reflect or amplify them, especially when prompts include roles, professions, or demographic descriptors. Teams should review images for representation, context, and unintended messaging. Another key area is misinformation: AI images can appear realistic enough to be mistaken for documentary photography. Using such visuals in news-like contexts can mislead audiences even if the text is accurate. Establish clear boundaries between illustrative and factual imagery. If your organization operates in healthcare, finance, or public policy, add an additional layer of review to ensure visuals do not imply guarantees, diagnoses, or outcomes. Responsible use is not just legal protection; it’s also a long-term trust strategy that keeps creative innovation aligned with audience expectations.

Future Trends: Where Text to Image AI Is Headed

Text to image AI is evolving toward greater controllability, higher fidelity, and deeper integration into creative software. One direction is precision: better handling of hands, faces, typography, and complex multi-object scenes. Another is control via structure—pose maps, depth maps, segmentation masks, and layout constraints that let creators lock composition while still benefiting from generative variety. Style consistency is improving through reference-based generation, allowing a brand to define a “house look” without retraining a full model. Multi-modal workflows are also expanding: text-to-image connects with image-to-video, 3D generation, and interactive design tools, enabling teams to move from a written brief to a full campaign concept with fewer handoffs. As these features mature, the role of the creator becomes more like a director—defining intent, constraints, and taste—while the system handles much of the rendering labor.

At the same time, governance and authenticity tools are likely to become more prominent. Watermarking, provenance metadata, and content credentials can help track how an image was created and edited, supporting transparency and reducing misuse. Enterprises will demand clearer licensing, auditable data policies, and secure deployment options. Expect more specialized models tuned for domains like fashion, architecture, industrial design, and medical illustration, where accuracy and controllability matter as much as aesthetics. Another trend is personalization: generating visuals that adapt to user preferences, language, and cultural context in real time. The challenge will be balancing personalization with consistency and brand integrity. As the ecosystem expands, success with text to image AI will depend less on chasing the newest model and more on building a reliable creative system: prompt libraries, review standards, ethical rules, and measurable performance outcomes.

Building a Sustainable Workflow with Text to Image AI

A sustainable workflow begins with clear objectives and constraints. Define what you need the images to do: increase click-through on ads, support a blog post with conceptual visuals, prototype packaging, or create social content at scale. Then define what the images must not do: misrepresent products, imply endorsements, include sensitive content, or violate brand guidelines. Create a small set of prompt templates aligned to your brand voice and visual identity—lighting preferences, color palette, composition rules, and acceptable styles. Keep a versioned record of prompts, seeds, and tool settings so successful outputs can be reproduced. This documentation turns text to image AI from an unpredictable experiment into a repeatable production process. It also helps new team members ramp up quickly and reduces dependency on a single “prompt expert.”
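A versioned record can be as simple as one JSON object per successful output. The sketch below is one possible shape: the field names and the short content-derived `record_id` are assumptions, not a standard format.

```python
import hashlib
import json

def make_record(prompt, negative_prompt, seed, tool, model_version, settings):
    """Bundle everything needed to reproduce an image into one dict.

    The record_id is derived from the content, so identical inputs always
    produce the same id and any change produces a different one.
    """
    record = {
        "prompt": prompt,
        "negative_prompt": negative_prompt,
        "seed": seed,
        "tool": tool,
        "model_version": model_version,
        "settings": settings,
    }
    canonical = json.dumps(record, sort_keys=True)
    record["record_id"] = hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]
    return record

def to_json_line(record):
    """Serialize a record as one line, suitable for appending to a log file."""
    return json.dumps(record, sort_keys=True)
```

Storing these lines alongside the exported images in version control gives new team members a searchable history of what worked and with which settings.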

Next, build a review and editing pipeline. Treat AI outputs as raw material: select the best candidates, run them through quality checks, and refine them with design tools. Establish standards for resolution, aspect ratios, compression, and color profiles. If images will include text overlays, leave negative space intentionally or generate backgrounds separately and compose in a layout tool for cleaner typography. For commercial teams, add legal and brand review steps for high-visibility assets. Finally, measure performance. If AI-generated ad creatives outperform traditional visuals in certain segments, document what worked—prompt structure, style, subject framing—and incorporate those lessons into the next iteration. With a disciplined approach, text to image AI becomes a dependable creative partner that enhances human decision-making rather than replacing it, delivering consistent visuals that match goals, timelines, and audience expectations.

Watch the demonstration video

In this video, you’ll learn how text-to-image AI turns written prompts into original visuals, what makes a prompt effective, and how different settings influence style, detail, and realism. It also covers common use cases, limitations, and tips for getting consistent results, so you can create better images faster with the right words.

Summary

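In summary, text to image AI turns written prompts into images through iterative, text-guided generation, and it works best as part of a hybrid workflow: clear prompt templates, controlled settings such as seeds and negative prompts, targeted edits like inpainting, and human review for quality, licensing, and brand safety.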

Frequently Asked Questions

What is text-to-image AI?

Text-to-image AI generates images from written prompts using trained machine-learning models.

How do I write a good prompt for text-to-image AI?

Specify subject, style, composition, lighting, colors, and camera details; add constraints like aspect ratio or realism level.

Why don’t the images match my prompt exactly?

Models interpret prompts probabilistically and may miss details; try clearer wording, fewer concepts, or iterative refinements.

What are common settings like steps, guidance, and seed?

Steps affect detail/time, guidance controls prompt adherence vs creativity, and seed enables repeatable variations.

Can I use text-to-image AI outputs commercially?

It depends on the tool’s license and your jurisdiction; review terms, model restrictions, and any trademark/copyright risks.

How can I improve image quality and consistency?

Use higher resolution or upscaling, consistent seeds and style cues, reference images if supported, and post-editing for fixes.
